I round up the most relevant AI-in-finance news - the deals being done, who’s rolling out what, and what’s actually working on the front lines.

Anthropic is raising $10 billion at a $350 billion valuation

…nearly doubling in four months. JPMorgan launched a new advisory unit to help clients figure out AI. And Business Insider delivered a neat roundup of AI use cases across the industry.

This week, I want to talk about what future-proofing looks like and why it's so important right now.

In This Week’s Issue:

From The Trenches:

The brittle stack problem (and what future-proofing actually looks like)

News Digest:

Anthropic raising $10B at $350B valuation, IPO eyed for 2026

JPMorgan launches Special Advisory to share its AI playbook

How PE firms are actually using AI (hint: it's not chatbots)

This Week in AI M&A:

Accenture/Faculty ~$1B

Other Cool Stuff I've Read or Seen:

a16z's $15B raise, TechRepublic on enterprise AI gaps, MIT Sloan's 2026 trends, OpenAI's $20B+ ARR

From The Trenches

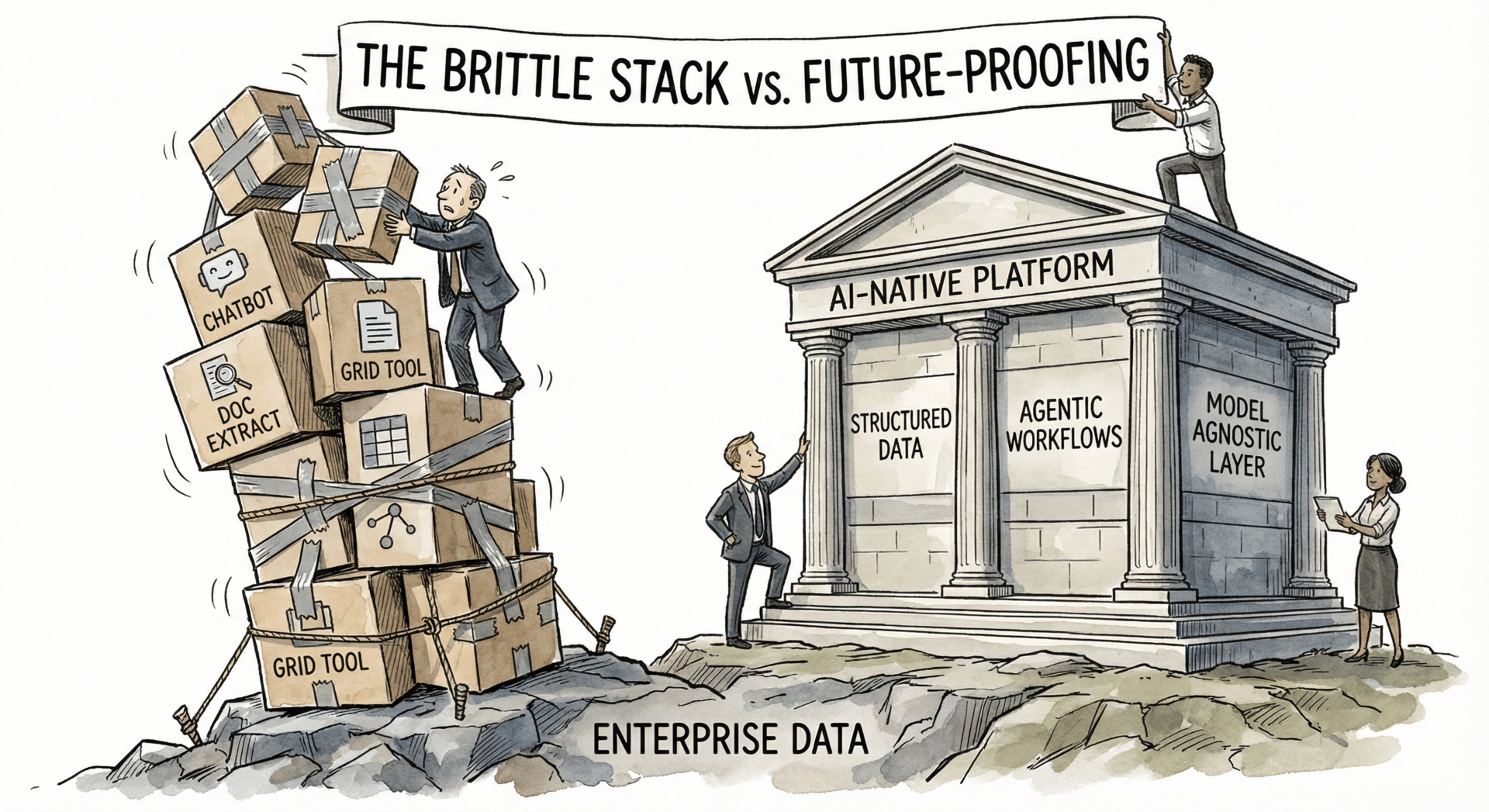

The Brittle Stack Problem

I saw a pitch deck from an AI consultant last week that stopped me in my tracks. Not because it was particularly impressive. And not even because they were charging $500k to $750k.

It was what they were actually selling. Four enterprise software products stitched together. This one extracts data, that one formats it, another one routes it somewhere else. Each tool has a $50k minimum buy-in. Then you're paying the consultant to integrate them all. And you're beholden to that consultant for who knows how long.

I spoke with the head of an AI task force at a regional bank who's evaluated over a dozen tools this year. His observation stuck with me:

“At the end of the day, when we use AI, it's not going to be 10 different logins. It's going to be one. It's going to sit at the OS level."

The problem with these duct-tape stacks is brittleness. You're at the whim of four different companies. If one changes its API, your workflow breaks. If one gets acquired or sunsets a feature, you're scrambling. If one raises prices, you're stuck. What happens when one of those four tools becomes obsolete in 18 months? You're back to square one, minus the $500k.

Be wary of cobbling together these solutions. The features that differentiated AI tools 12 months ago are already commoditized. Chatbots? Commoditized. Document grids? Commoditized. Basic extraction? Getting there. What's not commoditized is integrating all the actual work. That's the hard part.

And here's the other thing. The more tools you subscribe to, the more you have to stay on top of how the industry is evolving across all of them. That AI consultant? They're going to become a permanent fixture on your expense line. And they're not incentivized to move you onto the latest and greatest. They're incentivized to keep you on the stack they know how to support. You have to build for the future and stay flexible. They don't.

This mattered less when software lived in isolation. You bought a tool, it did its job, you moved on. But AI changes the equation. The connectedness matters now. Data flows between systems. Agents need to read from multiple sources and write to multiple destinations. If your stack is a patchwork of point solutions, you're building on sand.

So what does future-proofing actually look like?

Start with clean, structured data. This sounds boring, but it's the foundation everything else sits on. The models will keep changing. GPT-6, Claude 5, whatever comes next. But well-organized data that agents can reliably query? That's valuable regardless of which model you're running. If your data lives in a graveyard of 50 versions of every file in Box, no AI is going to save you.

Then think about agent-native software. You're going to hear this term a lot in 2026. It basically means software that's been configured for agents to use well. Not just a chat interface bolted on top. Actually designed so that AI agents can read from it, write to it, and take actions within it. The difference matters. Asking an agent to navigate software built for humans is like asking someone to drive a car using only voice commands. It works, sort of. But it's not how the system was designed to be used.
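To make "agent-native" a bit more concrete, here is a minimal sketch in Python. It assumes a hypothetical `AgentSurface` that exposes named actions with explicit parameter types, so an agent can discover what it is allowed to do and call it directly instead of clicking through a human UI. Every name here (`Action`, `AgentSurface`, `read_deal`, `set_stage`, the `deals` store) is invented for illustration and is not any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Action:
    """One operation an agent may perform (hypothetical structure)."""
    name: str
    description: str
    params: Dict[str, type]
    handler: Callable[..., Any]


@dataclass
class AgentSurface:
    """Registry of actions that an agent can list and call."""
    actions: Dict[str, Action] = field(default_factory=dict)

    def register(self, action: Action) -> None:
        self.actions[action.name] = action

    def describe(self) -> List[dict]:
        # Machine-readable catalogue: what exists and what each call needs.
        return [
            {
                "name": a.name,
                "description": a.description,
                "params": {k: t.__name__ for k, t in a.params.items()},
            }
            for a in self.actions.values()
        ]

    def call(self, name: str, **kwargs: Any) -> Any:
        action = self.actions[name]
        # Check declared parameters before touching any data.
        for key, expected in action.params.items():
            if not isinstance(kwargs.get(key), expected):
                raise TypeError(f"{name}: '{key}' must be {expected.__name__}")
        return action.handler(**kwargs)


# Toy in-memory data store standing in for a real system of record.
deals = {"D-1": {"company": "Acme", "stage": "screening"}}


def read_deal(deal_id: str) -> dict:
    return dict(deals[deal_id])


def set_stage(deal_id: str, stage: str) -> dict:
    deals[deal_id]["stage"] = stage
    return dict(deals[deal_id])


surface = AgentSurface()
surface.register(Action("read_deal", "Fetch one deal record", {"deal_id": str}, read_deal))
surface.register(
    Action("set_stage", "Move a deal to a new stage", {"deal_id": str, "stage": str}, set_stage)
)

print(surface.describe())
print(surface.call("set_stage", deal_id="D-1", stage="diligence"))
```

The point of the sketch is the shape, not the details: reads, writes, and actions are declared up front, so the agent never has to guess at buttons and screens.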

The winning approach is platform agnosticism. One system that structures your data, connects to whatever LLM makes sense, and doesn't lock you into a vendor's roadmap. You want to be able to swap out the model layer without rebuilding your entire stack. That flexibility is going to matter a lot as this space evolves.
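One rough way to picture a swappable model layer, sketched in Python. The assumption is that workflow code depends only on a small interface (`ModelClient` below) rather than on a specific vendor SDK. `ModelClient`, `EchoModel`, `DealWorkflow`, and the record fields are all hypothetical names for illustration; `EchoModel` is a stand-in that just echoes the prompt, not a real LLM call.

```python
from dataclasses import dataclass
from typing import Dict, List, Protocol


class ModelClient(Protocol):
    """Anything that turns a prompt into text (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in for a vendor SDK; it only labels and echoes the prompt."""
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class DealWorkflow:
    """Workflow logic that knows the interface, not the vendor."""
    model: ModelClient

    def summarize(self, records: List[Dict]) -> str:
        # Structured data goes in first; the model is only the last step.
        lines = [f"{r['company']}: EV ${r['ev_musd']}M" for r in records]
        return self.model.complete("Summarize: " + "; ".join(lines))


records = [{"company": "Acme", "ev_musd": 120}]

workflow = DealWorkflow(model=EchoModel("model-a"))
print(workflow.summarize(records))

# Swap the model layer; the data and the workflow code stay untouched.
workflow.model = EchoModel("model-b")
print(workflow.summarize(records))
```

In a real system each vendor would get its own thin adapter behind the same interface, which is what keeps a model change from turning into a rebuild.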

This is what we've been building towards at DealSage. A foundation that doesn't break when the landscape shifts. Because it will shift. Probably faster than any of us expect.

News Digest

Anthropic Raising $10B at $350B Valuation, IPO Eyed for 2026

Anthropic is in talks to raise $10 billion at a $350 billion valuation, nearly doubling its valuation in just four months. Coatue Management and GIC, Singapore's sovereign wealth fund, are leading the round, which is expected to close in the coming weeks.

This is separate from the $15 billion that Nvidia and Microsoft committed in November (a "circular" deal where Anthropic buys $30 billion of Azure compute running on Nvidia chips). It's also Anthropic's third mega-round in a year. In March they raised $3.5 billion at $61.5 billion. In September, $13 billion at $183 billion. Now $350 billion. That's nearly a 6x valuation jump in under 12 months.
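For anyone who wants to check the step-ups, here is the arithmetic as a quick Python sketch. It uses only the valuations quoted above and treats them as directly comparable, which is an assumption, since headline figures can mix pre-money and post-money numbers.

```python
# Valuations quoted above, in billions of dollars.
rounds = [
    ("March", 61.5),
    ("September", 183.0),
    ("Now", 350.0),
]


def step_up(old: float, new: float) -> float:
    """Multiple between two valuations."""
    return new / old


# Round-over-round multiples.
for (prev_label, prev_val), (label, val) in zip(rounds, rounds[1:]):
    print(f"{prev_label} -> {label}: {step_up(prev_val, val):.2f}x")

# Multiple across the whole run.
total = step_up(rounds[0][1], rounds[-1][1])
print(f"{rounds[0][0]} -> {rounds[-1][0]}: {total:.2f}x")
```

On these inputs the full run works out to roughly 5.7x, with the September-to-now step a bit over 1.9x.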

The details:

$10B raise at $350B pre-money valuation (led by Coatue, GIC)

Nearly doubles $183B valuation from September (4 months ago)

Separate from $15B Nvidia/Microsoft strategic commitment

Claude Code generating $500M+ run-rate revenue

300,000+ business accounts

Expects to break even by 2028 (ahead of OpenAI)

Potential IPO later in 2026

Why it matters: Anthropic is now valued at $350B. OpenAI is reportedly seeking $100B at up to $830B. These two companies alone would be worth over $1 trillion.

My take: The break-even timeline is the buried lede. Anthropic expects profitability by 2028, ahead of OpenAI. Their focus on enterprise is likely to lead to more immediate results. I’m a huge Anthropic stan so this looks cheap to me.

JPMorgan Launches Special Advisory to Share Its AI Playbook

JPMorgan quietly launched Special Advisory Services this month, a new unit that shares the bank's internal playbooks on AI, cybersecurity, digital assets, and more with top clients. The group is led by Liz Myers, a 30-year JPM veteran and Global Chair of Investment Banking.

The origin story is telling. Clients kept asking how JPMorgan itself navigates AI. What tools do you use? How do you think about cybersecurity? Jamie Dimon decided to formalize those conversations into a dedicated offering. The bank isn't charging fees initially, though that may change for time-intensive engagements.

The details:

New advisory unit sharing JPM's internal expertise

Led by Liz Myers (Global Chair of Investment Banking)

Advisory areas: AI, cybersecurity, digital assets, geopolitics, healthcare, supply chain

Target clients: IPO prospects, long-tenured relationships, mid-sized firms

Not charging initially (fees possible for intensive projects)

Why it matters: When clients start asking their bank for AI advice instead of just deal advice, that's a signal about where value is shifting.

My take: JPMorgan is productizing its operational expertise. That's interesting. The bank spent $18 billion on tech last year and has 200,000+ employees with generative AI access. Now they're packaging those learnings for clients. If your competitive advantage includes "we figured out AI before everyone else," why not sell it?

Latest Roundup of AI in Finance Use Cases

Business Insider published a comprehensive roundup of AI adoption across Wall Street this week. The banking numbers are impressive on the surface: JPMorgan has 200,000+ employees with gen AI access, Citi logged 7 million AI uses in 2025. But most of the wins are internal. Code generation. Legacy system maintenance. Developer productivity.

The PE use cases are more interesting. EQT's Motherbrain is genuinely changing how they source deals, using AI to identify targets before they hit the market. Blackstone is building enterprise-wide search across portfolio companies and leaning hard into insurance. These aren't chatbot deployments. They're workflow transformations.

The details:

Banks: Mostly internal productivity (code gen, dev tools, legacy maintenance)

EQT: Motherbrain AI changing deal sourcing, identifying targets pre-market

Blackstone: Enterprise search across portfolio, insurance AI play

Morgan Stanley: 72% of interns use ChatGPT daily (for what, exactly?)

Goldman: AI driving "limited reduction" in some roles

Why it matters: Banks are optimizing what they already do. PE firms are trying to do new things. Different priorities, different use cases.

My take: The PE playbook is worth watching more closely. EQT isn't using AI to write emails faster. They're using it to find deals their competitors haven't seen yet. That's a structural advantage.

This Week in AI M&A:

Accenture acquires Faculty for ~$1B (Jan 6) - UK-based AI services firm with 400+ specialists joins Accenture. Faculty CEO Marc Warner becomes Accenture CTO, joining Global Management Committee. Faculty works with OpenAI and Anthropic on AI safety, built NHS Early Warning System during COVID. Investors say deal grants "unicorn" status. When your acquisition target's CEO becomes your CTO, that's not a tuck-in. That's a statement.

Other Cool Stuff I’ve Read or Seen This Week:

a16z raises $15B, largest VC fundraise ever (Jan 9) - That's 18% of all US VC dollars allocated in 2025. $3.4B for AI, $1.2B for "American Dynamism" (defense, aerospace). When one firm captures nearly a fifth of the market, that's not diversification.

TechRepublic: Only 8.6% of companies have AI agents in production (Jan 6) - Survey of 120,000+ enterprises shows 63.7% have no formalized AI initiative. Nearly 60% say legacy integration is the primary barrier. The gap between "using AI" and "getting value from AI" remains a canyon.

MIT Sloan: Five AI trends for 2026 (Jan 6) - Agents will fall into the "trough of disillusionment" this year. GenAI shifting from individual tool to enterprise resource. The hype cycle continues its inevitable march.

OpenAI exits 2025 at $20B+ ARR (Jan 9) - CFO Sarah Friar: "We'll exit this year at a little bit more than $20 billion." That's 10x from 2023. Revenue is real. Profits remain theoretical.

Acquisition Intelligence is a weekly newsletter on AI in M&A for finance professionals, private equity investors, investment bankers, corp dev teams, and deal-makers.

For questions, feedback, or to share what you're seeing in the market, reply to this email.

P.S. I'm Harry, co-founder of DealSage. We're building an AI-native deal intelligence platform to help professionals turn their institutional knowledge into better decisions. If you're curious what we're up to, check out dealsage.io or just reply here.